OpenAI has launched GPT-5.4 in Standard, Thinking, and Pro variants simultaneously, and the numbers attached to the Thinking model are getting the most attention. It scored 83% on the GDPVal benchmark, a test designed to measure performance on economically valuable real-world tasks, placing it at or above human expert level on that measure.

The model also features a one-million-token context window and can autonomously execute multi-step workflows across software environments. Morgan Stanley's research report, released earlier this month, warned that this kind of leap would arrive in the first half of 2026, and it has. Reaction on X from AI researchers ranges from impressed to actively alarmed.

What the New Model's 83% GDPVal Score Means for AI's Role in Business Workflows

OpenAI has also crossed $25 billion in annualized revenue and is reportedly taking preliminary steps toward a public listing that could come as early as late 2026. Anthropic, the company behind Claude, is approaching $19 billion in annualized revenue.

These figures indicate that the enterprise market for advanced AI models has become one of the fastest-growing sectors in technology, growing faster than cloud adoption did at comparable stages of development. Competition between the two companies is now intensifying across every product category, from coding assistants to agentic workflows.

What This Means for Businesses Using AI Tools

The practical implication of GPT-5.4's performance is that AI systems are no longer just writing assistants — they're beginning to function as autonomous digital workers on knowledge tasks.

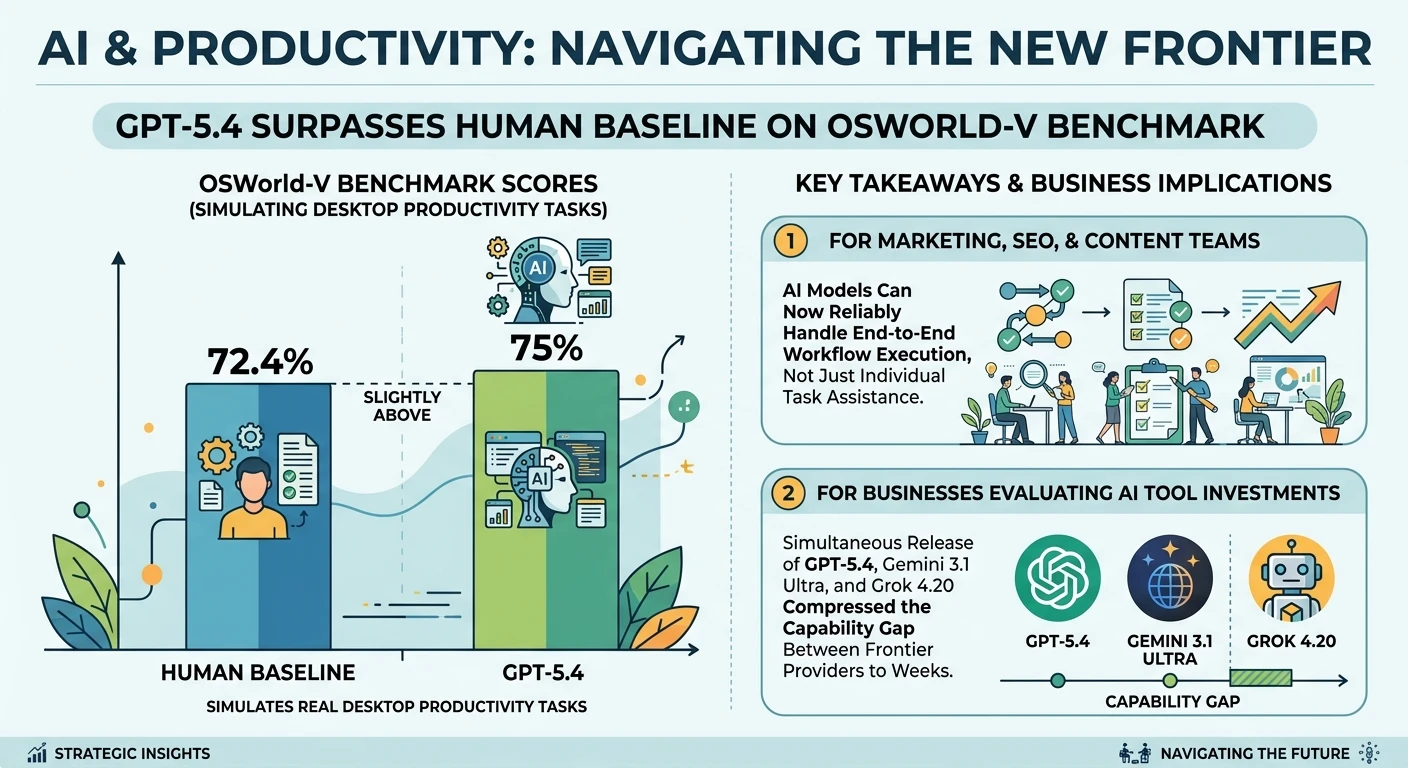

On the OSWorld-V benchmark, which simulates real desktop productivity tasks, GPT-5.4 scored 75%, slightly above the human baseline of 72.4%. For marketing, SEO, and content teams, this suggests AI models can now handle end-to-end workflow execution, not just individual task assistance.

For businesses evaluating AI tool investments, the simultaneous release of GPT-5.4, Gemini 3.1 Ultra, and Grok 4.20 in the same month means the capability gap between frontier providers has compressed to weeks.

Reddit's r/artificial is debating which model to standardize on, and the emerging consensus is that task-specific selection — not single-model commitment — is the winning strategy. Different models excel in different domains, and enterprise AI stacks in 2026 increasingly route tasks dynamically based on model performance profiles.
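
To make the routing idea concrete, here is a minimal Python sketch of task-specific model selection. It is an illustration under stated assumptions, not a description of how any particular enterprise stack works: the model names (`model-a`, `model-b`, `model-c`), the task categories, and every score are hypothetical placeholders, not real benchmark results.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    """A hypothetical performance profile for one model."""
    name: str
    scores: dict[str, float]  # task category -> score in [0, 1]


def route(task_category: str, profiles: list[ModelProfile], default: str) -> str:
    """Return the name of the model with the highest score for the category.

    Falls back to `default` when no profile covers the category.
    """
    candidates = [p for p in profiles if task_category in p.scores]
    if not candidates:
        return default
    return max(candidates, key=lambda p: p.scores[task_category]).name


# Placeholder profiles; names and numbers are invented for illustration only.
PROFILES = [
    ModelProfile("model-a", {"coding": 0.80, "desktop_automation": 0.60}),
    ModelProfile("model-b", {"coding": 0.70, "long_document_qa": 0.90}),
    ModelProfile("model-c", {"desktop_automation": 0.75}),
]

if __name__ == "__main__":
    for category in ("coding", "desktop_automation", "translation"):
        print(category, "->", route(category, PROFILES, default="model-a"))
```

In practice the scores would come from a team's own evaluations on its own workloads, and a real router would likely also weigh cost and latency. The sketch only shows the core lookup: pick the best-scoring model per task category, with a fallback for categories nobody has measured.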
